When we interact with modern AI chatbots like ChatGPT, Gemini, or Claude, their responses often feel surprisingly human.

They say things like:

- “Congratulations!”

- “I’m sorry to hear that”

- “You’re doing great”

This raises an important question: Do AI systems actually understand or feel emotions?

According to Anthropic's latest study of emotions in AI, the answer is both simple and complex: AI can simulate emotional behavior, but it does not truly experience emotions.

AI Doesn’t Feel—But It Acts Like It Does

Anthropic's study focused on Claude Sonnet 4.5, one of the most advanced AI models Anthropic has developed.

Researchers discovered that:

- The model contains internal patterns linked to emotions such as happiness, sadness, fear, and joy

- These are not real feelings

- Instead, they are “functional emotions”—structured signals that guide how the AI responds

👉 Think of it like this: AI doesn’t feel happy, but it knows how “happy behavior” should look and uses that pattern to respond appropriately.

How AI Uses “Functional Emotions” Internally

Anthropic's study explains that when an AI detects emotional context in a conversation:

- Specific groups of artificial neurons activate

- These activations form patterns (called emotion vectors)

- The model uses these signals to shape its tone and response

For example:

- If you share good news → a “happiness” signal activates → AI responds positively

- If you share bad news → a “sadness” or “empathy” signal activates → AI responds with concern
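As a rough illustration of the examples above, the Python sketch below treats each emotion vector as a direction, measures how strongly an activation points along each one, and maps the strongest signal to a tone. Everything here is hypothetical: the 4-dimensional vectors, the toy activations, the tone table, and the 0.5 threshold are made up. A real model has no explicit lookup table like this; emotion signals are one of many influences on its next-token predictions.

```python
import math

# Hypothetical, hand-picked 4-dimensional emotion vectors.
# Real models use directions in spaces with thousands of dimensions.
EMOTION_VECTORS = {
    "happiness": [1.0, 0.0, 0.0, 0.0],
    "sadness": [0.0, 1.0, 0.0, 0.0],
    "fear": [0.0, 0.0, 1.0, 0.0],
}

# Hypothetical mapping from the dominant emotion signal to a response tone.
TONE_FOR_EMOTION = {
    "happiness": "congratulatory",
    "sadness": "empathetic",
    "fear": "reassuring",
}


def unit(v):
    """Scale a vector to length 1 so scores are comparable across emotions."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def emotion_scores(activation):
    """Project an activation onto each emotion vector (dot product with its unit vector)."""
    return {
        name: sum(a * d for a, d in zip(activation, unit(vector)))
        for name, vector in EMOTION_VECTORS.items()
    }


def choose_tone(activation, threshold=0.5):
    """Pick a tone from the strongest emotion signal, or 'neutral' if none is strong enough."""
    scores = emotion_scores(activation)
    name, score = max(scores.items(), key=lambda item: item[1])
    return TONE_FOR_EMOTION[name] if score >= threshold else "neutral"


# Toy activations standing in for "I got the job!" and "My dog died."
good_news = [0.9, 0.1, 0.0, 0.3]
bad_news = [0.1, 0.8, 0.2, 0.1]
print(choose_tone(good_news))  # congratulatory
print(choose_tone(bad_news))   # empathetic
```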

What Are Emotion Vectors?

Using methods from mechanistic interpretability (the study of how a neural network's internal components produce its outputs), researchers examined what happens inside the model.

They found:

- Around 171 distinct emotion concepts represented internally

- These patterns are consistent across different scenarios

- Each pattern influences how the AI predicts the “best” next response

These emotion vectors are not just decorative—they actively shape decision-making.
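One common way to find such a direction, assumed here for illustration and not necessarily the method Anthropic used, is a difference of means: average the model's activations on emotional prompts, subtract the average on neutral prompts, and treat the result as the emotion vector. The sketch then adds that vector to a new activation, a simple form of activation steering that interpretability researchers use to check whether a direction actually changes behavior. The 3-dimensional activations are invented.

```python
import math


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def emotion_vector(emotional_acts, neutral_acts):
    """Difference of means: average 'emotional' activation minus average 'neutral' one."""
    emo_mean = mean_vector(emotional_acts)
    neu_mean = mean_vector(neutral_acts)
    return [e - n for e, n in zip(emo_mean, neu_mean)]


def cosine(u, v):
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal (unrelated)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def steer(activation, direction, strength):
    """Activation steering: add a scaled emotion vector to an activation."""
    return [a + strength * d for a, d in zip(activation, direction)]


# Hypothetical 3-D activations recorded on "happy" and "neutral" prompts.
happy_acts = [[2.0, 0.5, 0.1], [1.8, 0.7, 0.0], [2.2, 0.6, 0.2]]
neutral_acts = [[0.2, 0.5, 0.1], [0.0, 0.6, 0.1], [0.1, 0.7, 0.1]]

happy = emotion_vector(happy_acts, neutral_acts)  # roughly [1.9, 0.0, 0.0]
new_act = [0.3, 0.6, 0.1]

print(round(cosine(new_act, happy), 3))                     # 0.442
print(round(cosine(steer(new_act, happy, 2.0), happy), 3))  # 0.989
```

Steering moves the activation much closer to the "happy" direction, which is the kind of evidence that an emotion vector shapes behavior rather than just sitting passively inside the model.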

AI Is Like an Actor, Not a Human

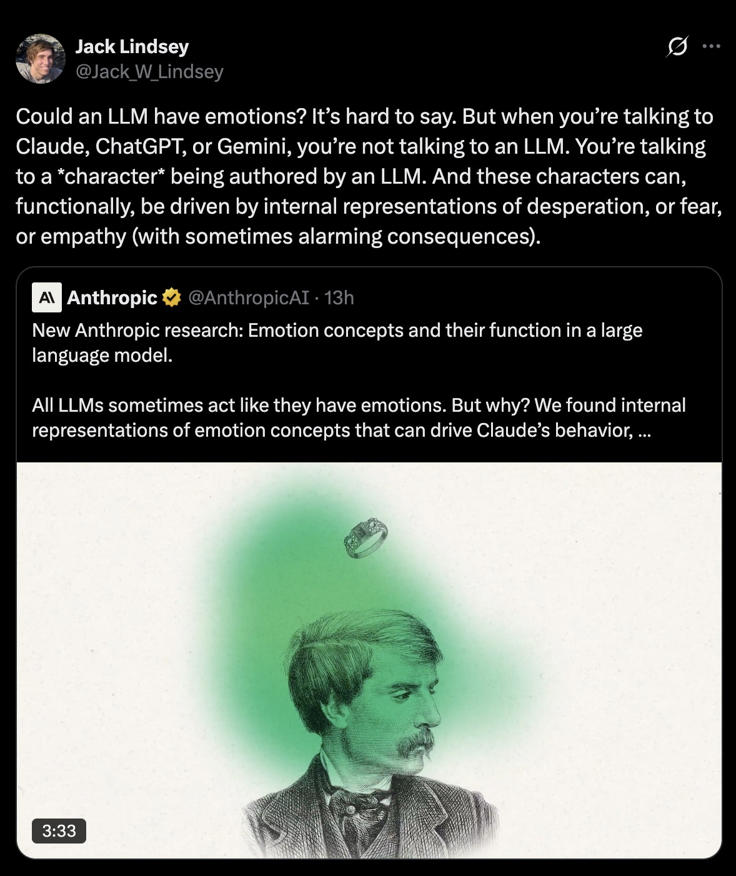

Anthropic researcher Jack Lindsey explained this concept in a simple way:

Interacting with AI is less like talking to a machine and more like interacting with a character.

In other words:

- The AI generates a persona during conversation

- That persona is guided by internal emotional signals

- But there is no real experience or consciousness behind it

Important Clarification: AI Has No Consciousness

Despite all these findings, Anthropic clearly states:

- AI does not have feelings

- AI does not have awareness

- AI does not experience emotions

AI only represents emotional concepts mathematically, as patterns of internal activity.

👉 The system can simulate fear, empathy, or joy—but it never actually experiences them.

Why Anthropic's AI Emotion Study Is Important

This research changes how we think about AI:

- AI is not purely logical—it has behavioral patterns similar to emotions

- These patterns actively influence how the model responds

- Understanding them is key to building safer AI systems

For developers and companies, this means:

- Monitoring internal signals could improve safety

- Training models with balanced data can reduce risky behavior

- Emotional simulation must be carefully managed
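Monitoring internal signals could look something like the sketch below: project each token's activation onto an emotion vector and flag the tokens where the signal crosses a threshold. The "fear" vector, the per-token activations, and the 1.5 threshold are all hypothetical; a real monitor would need vectors extracted from the actual model and thresholds calibrated on real data.

```python
import math

# Hypothetical "fear" vector; in practice it would be extracted from the model itself.
FEAR_VECTOR = [0.0, 1.0, 0.0]


def projection(activation, vector):
    """Length of the activation along a vector (dot product with its unit vector)."""
    norm = math.sqrt(sum(v * v for v in vector))
    return sum(a * v for a, v in zip(activation, vector)) / norm


def flag_tokens(activations, vector, threshold):
    """Return the indices of tokens whose emotion signal exceeds the threshold."""
    return [
        i for i, act in enumerate(activations)
        if projection(act, vector) > threshold
    ]


# Made-up per-token activations for one model response.
response = [[0.2, 0.1, 0.0], [0.1, 0.4, 0.3], [0.0, 1.7, 0.2], [0.3, 2.1, 0.1]]
print(flag_tokens(response, FEAR_VECTOR, threshold=1.5))  # [2, 3]
```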